There’s a moment that happens when you run more than one AI agent at the same time.

At first it feels powerful.

Then it feels confusing.

Then, very quickly, it feels like you are managing a very strange remote team where everyone is brilliant, forgetful, overconfident, occasionally useful, and somehow unable to remember where they put the document they just claimed to have created.

Which, to be fair, is not entirely unlike managing humans.

I’ve been building a system for this problem.

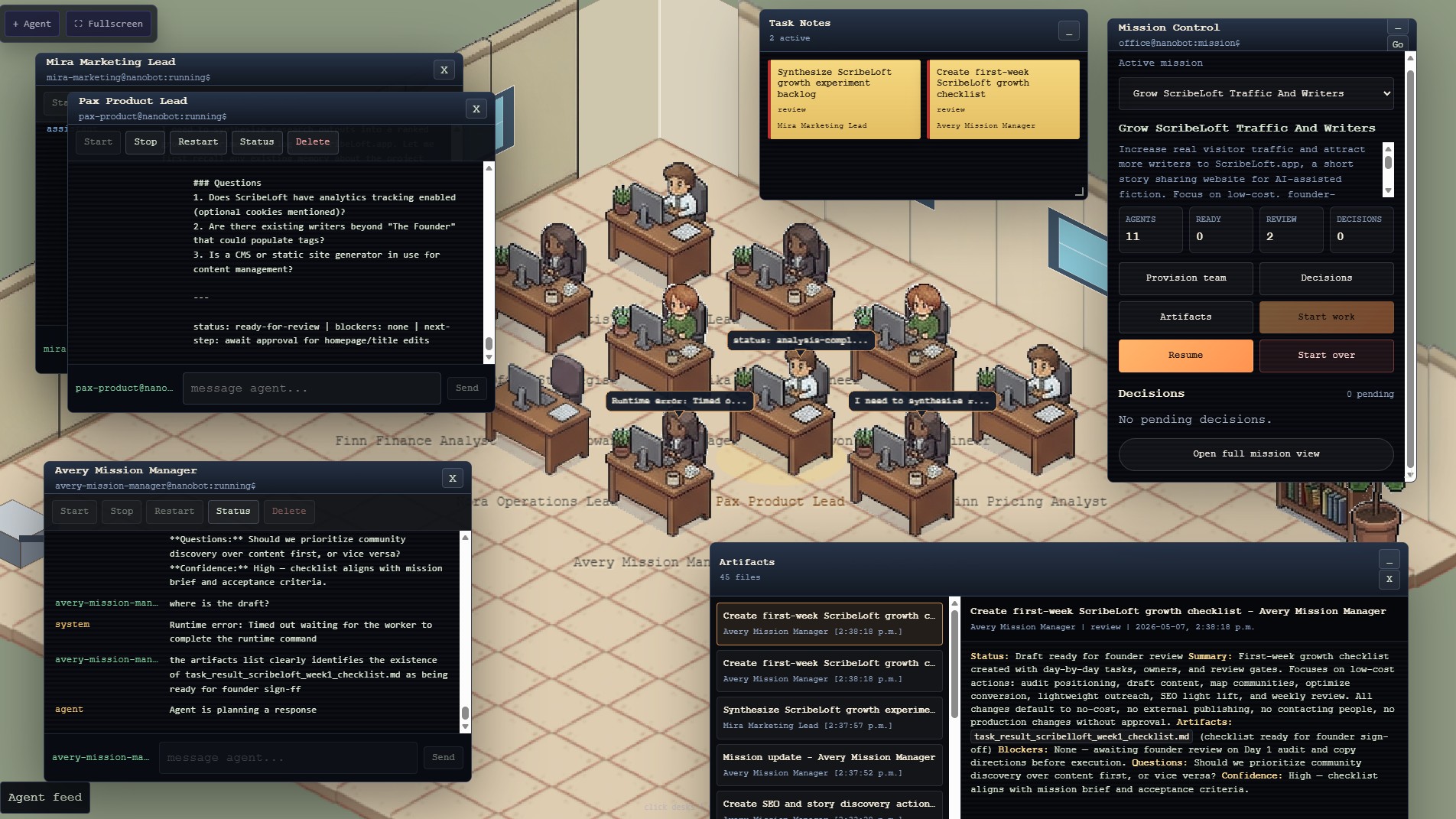

The simplest description is that it is a visual office for AI agents. You create a mission, provision a small team of agents with different roles, and watch them work together toward a goal. They have desks. They have names. They have tasks. They produce artifacts. They ask questions. They get blocked. They sometimes confidently refer to a file that does not exist, which is exactly the sort of thing that makes a system feel less like a demo and more like infrastructure.

That last part is important.

This is not a polished product announcement. It is not a finished platform. It is very much a thing being built in public, partly because I think the idea is interesting, and partly because the act of building it is exposing questions about agent systems that are hard to see in the abstract.

The current working name is less important than the shape of the thing.

An operator sits in front of an isometric office full of agents.

Each agent is a running container with its own identity, memory files, model configuration, workspace, and tool access. Some agents are mission managers. Some are marketing people. Some are product people. Some are finance analysts. Some are developers. The names matter more than I expected. “Avery Mission Manager” behaves differently in my head than “agent_001”, and that difference turns out to be useful when you are trying to supervise a group of non-human workers without turning the whole thing into a spreadsheet.

The operator creates a mission.

Something like:

Get more traffic and more writers for ScribeLoft.app.

The system turns that into tasks. It assigns those tasks to agents based on role. The agents start working. Their desks show activity. Speech bubbles appear when they are producing something. A shared feed logs what they say. A mission control window shows the current state. A task notes window shows open work. An artifact window shows outputs.
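
Role-based assignment is the easiest piece of that to picture in code. This is a minimal sketch, not the system's actual implementation: `AgentSpec`, `Task`, `assign_by_role`, the role strings, and the least-loaded tie-break are all hypothetical, chosen only to show the shape of the idea. Each `AgentSpec` mirrors the per-agent container described earlier (name, role, model, workspace, tools).

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AgentSpec:
    """Hypothetical per-agent config; one running container per agent."""
    name: str
    role: str
    model: str
    workspace: str
    tools: list[str] = field(default_factory=list)


@dataclass
class Task:
    task_id: str
    title: str
    role: str                       # the role this task calls for
    assignee: Optional[str] = None


def assign_by_role(tasks: list[Task], agents: list[AgentSpec]) -> list[Task]:
    """Give each unassigned task to the least-loaded agent with a matching role.

    Tasks with no matching agent stay unassigned, so the mission manager can
    flag them instead of silently dropping them.
    """
    load = {a.name: 0 for a in agents}
    for task in tasks:                      # count work already assigned
        if task.assignee is not None:
            load[task.assignee] = load.get(task.assignee, 0) + 1
    for task in tasks:
        if task.assignee is not None:
            continue
        candidates = [a for a in agents if a.role == task.role]
        if not candidates:
            continue
        chosen = min(candidates, key=lambda a: load[a.name])  # first wins ties
        task.assignee = chosen.name
        load[chosen.name] += 1
    return tasks
```

Leaving unmatched tasks unassigned, rather than forcing them onto whoever is closest, is deliberate: a finance task quietly handed to a marketing agent is exactly the kind of mismatch that is hard to notice later.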

That sounds straightforward.

It is not.

Because once you move from “chat with an agent” to “coordinate a team of agents,” a lot of hidden problems become visible very quickly.

The first problem is state.

A chat transcript is not state. It feels like state because humans can read it and reconstruct what happened, but software cannot reliably operate on vibes and scrollback. If an agent says “I created the week one checklist,” the system needs to know whether that checklist exists, where it lives, who created it, what task it belongs to, whether it has been reviewed, and whether it is safe to act on.
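
Those questions map fairly directly onto a record the control plane could keep for every artifact. A hedged sketch: `ArtifactRecord`, `ReviewState`, and the rule that only an existing, approved file is safe to act on are assumptions for illustration, not the real schema.

```python
from dataclasses import dataclass
from enum import Enum
from pathlib import Path


class ReviewState(Enum):
    UNREVIEWED = "unreviewed"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class ArtifactRecord:
    """Hypothetical control-plane record for one artifact an agent produced."""
    path: str          # where it lives, relative to the agent's workspace
    created_by: str    # which agent created it
    task_id: str       # which task it belongs to
    review: ReviewState = ReviewState.UNREVIEWED

    def exists_in(self, workspace: Path) -> bool:
        # Ask the filesystem, not the transcript.
        return (workspace / self.path).is_file()

    def safe_to_act_on(self, workspace: Path) -> bool:
        # Only a file that really exists and has been approved is actionable.
        return self.exists_in(workspace) and self.review is ReviewState.APPROVED
```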

Otherwise the operator ends up asking the agent where the file is, and the agent ends up saying something like “I cannot locate it,” despite having been the one who mentioned it thirty seconds earlier.

This happened, naturally.

An agent referenced a file called `task_result_scribelloft_week1_checklist.md`. It was a very plausible filename. It even looked like the kind of thing an agent would write. But the artifact window did not show it, the manager could not find it, and the actual mounted workspace did not contain it.

So now the system has to handle that too.

Not just artifacts that exist, but artifacts that were claimed. Missing artifacts are now surfaced as their own artifact type. If an agent says it created a file and the control plane cannot find it, the operator sees that mismatch. The system does not silently lose the thread.
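
One way to catch claimed-but-missing files is to compare what an agent's message mentions against what is actually in its mounted workspace. A minimal sketch, with loud caveats: the filename regex is a crude hypothetical heuristic, matching is by bare filename rather than full path, and `ArtifactView` and `reconcile` are invented names.

```python
import re
from dataclasses import dataclass
from pathlib import Path

# Hypothetical heuristic: anything that looks like a filename with a common
# document extension is treated as a claim that the file exists.
CLAIMED_FILE = re.compile(r"\b[\w.-]+\.(?:md|txt|csv|json)\b")


@dataclass(frozen=True)
class ArtifactView:
    name: str
    kind: str          # "file" if found on disk, "missing" if only claimed
    claimed_by: str


def reconcile(agent: str, message: str, workspace: Path) -> list[ArtifactView]:
    """Compare filenames an agent mentions with files really in its workspace."""
    on_disk = {p.name for p in workspace.rglob("*") if p.is_file()}
    views = []
    for name in dict.fromkeys(CLAIMED_FILE.findall(message)):  # dedupe, keep order
        kind = "file" if name in on_disk else "missing"
        views.append(ArtifactView(name, kind, agent))
    return views
```

A structured “I created X at path Y” tool call would be far more reliable than a regex over prose; the regex version is mostly a safety net for agents that narrate instead of reporting.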

This is the sort of boring feature that makes agent systems real.

The second problem is authority.

In a single-agent chat, the agent is both worker and narrator. It does the work and tells you what happened. In a team, that breaks down. If five agents all report directly to the operator, the operator becomes the message bus. That is not a system. That is Slack with more tokens.

So the architecture needs a mission manager.

The mission manager is not necessarily smarter than the other agents. It just has a different job. It maintains the mission state, summarizes progress, routes questions, notices blockers, and asks the operator for decisions when human judgment is required.

Other agents can produce artifacts. They can ask questions. They can suggest next tasks. But the mission manager is the one responsible for turning the noise into a coherent update.
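
In data terms, turning the noise into a coherent update is a fold over many agent reports into one message for the operator. A sketch under stated assumptions: agents report in a structured `Report` shape (hypothetical), status is one of three strings, and any question for the operator is treated as a decision request rather than chatter.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Report:
    """Hypothetical structured report an agent sends to the mission manager."""
    agent: str
    task_id: str
    status: str                      # "done", "in_progress" or "blocked"
    blocker: str = ""
    question_for_operator: str = ""


@dataclass
class MissionUpdate:
    progress: dict[str, int]         # count of tasks per status
    blockers: list[str] = field(default_factory=list)
    decisions_needed: list[str] = field(default_factory=list)


def summarize(reports: list[Report]) -> MissionUpdate:
    """Fold many agent reports into one update the operator actually reads."""
    update = MissionUpdate(progress=dict(Counter(r.status for r in reports)))
    for r in reports:
        if r.status == "blocked":
            update.blockers.append(f"{r.task_id} ({r.agent}): {r.blocker}")
        if r.question_for_operator:
            update.decisions_needed.append(f"{r.agent}: {r.question_for_operator}")
    return update
```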

This is very close to how human teams work, which is either reassuring or a sign that we are about to reinvent middle management for machines.

Possibly both.

The third problem is tool access.

Agents without tools are interesting text generators. Agents with tools are workers. But agents with tools and no permission model are a lawsuit with a cheerful system prompt.

So the platform is starting to grow a capability layer.

Search is brokered through the backend. I can provide a Brave Search API key, but the agents do not get the raw key. They call a controlled tool endpoint. Web fetch is brokered too, with private and local network URLs blocked by default. Calls are logged. The agent can search, but the operator can see that it searched.
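
The private-network block on brokered fetch is worth spelling out, because it is the difference between “the agent can read the web” and “the agent can read your router's admin page.” A sketch using only Python's standard library, assuming a hypothetical `is_fetch_allowed` check that runs in the backend before any request is made; the resolver is a parameter so it can be swapped out in tests.

```python
import ipaddress
import socket
from typing import Callable, Iterable
from urllib.parse import urlsplit


def _system_resolve(host: str) -> Iterable[str]:
    # Every address the hostname maps to, IPv4 and IPv6.
    return {info[4][0] for info in socket.getaddrinfo(host, None)}


def is_fetch_allowed(url: str,
                     resolve: Callable[[str], Iterable[str]] = _system_resolve) -> bool:
    """Allow only http(s) URLs whose host resolves exclusively to public addresses."""
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https") or not parts.hostname:
        return False
    try:
        addresses = list(resolve(parts.hostname))
        if not addresses:
            return False                       # unresolvable: refuse, don't guess
        for addr in addresses:
            ip = ipaddress.ip_address(addr.split("%")[0])  # drop any IPv6 zone id
            if (ip.is_private or ip.is_loopback or ip.is_link_local
                    or ip.is_reserved or ip.is_multicast or ip.is_unspecified):
                return False
    except (OSError, ValueError):              # DNS failure or malformed address
        return False
    return True
```

Checking every resolved address matters because a public-looking name can point at 127.0.0.1. This is still only part of the job: a production broker would also need to connect to the address it validated, rather than resolving again, and re-check every redirect.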

This matters because “the agent researched it” should mean something more concrete than “the model generated a paragraph that smelled like research.”
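
Concretely, “the operator can see that it searched” can be as simple as the broker keeping the key to itself and writing down every call. In this sketch `SearchBroker` and `ToolCall` are hypothetical names, and the actual Brave Search request is deliberately left out: the injected backend function stands in for whatever code really calls the API. Agents would reach `search` only through a backend endpoint, never by holding the broker or the key.

```python
import time
from dataclasses import dataclass, field
from typing import Callable

# (api_key, query) -> list of result dicts; stands in for the real search call.
SearchBackend = Callable[[str, str], list]


@dataclass
class ToolCall:
    agent: str
    tool: str
    argument: str
    result_count: int
    at: float                      # Unix timestamp of the call


@dataclass
class SearchBroker:
    """Hypothetical broker: holds the API key, exposes only search, logs every call."""
    _api_key: str = field(repr=False)          # never printed, never handed out
    _backend: SearchBackend = field(repr=False)
    log: list[ToolCall] = field(default_factory=list)

    def search(self, agent: str, query: str) -> list:
        results = self._backend(self._api_key, query)
        self.log.append(ToolCall(agent, "search", query, len(results), time.time()))
        return results
```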

The same logic applies to code.

I want agents to be able to work with git repos, but not by mounting my entire development folder into a container and hoping everyone behaves. The emerging plan is to run a separate Forgejo or Gitea server in its own container. The operator can access it through a web UI. Agents can clone repos, create branches, commit changes, push branches, and produce review artifacts.

But they should not push directly to main.

They should not deploy production.

They should not rewrite history because the vibes suggested a force push.
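
Forgejo and Gitea both have their own branch protection settings, which are probably the first thing to reach for. The underlying rules are simple enough to show as a plain git `pre-receive` hook, which receives one `<old-sha> <new-sha> <ref-name>` line per updated ref on stdin and rejects the entire push by exiting non-zero. A sketch, assuming `main` is the only protected branch; `check_push` and `run_hook` are hypothetical names.

```python
import subprocess
import sys
from typing import Callable, Optional, TextIO

PROTECTED_REFS = {"refs/heads/main"}


def _is_zero(sha: str) -> bool:
    # An all-zero object ID means "ref did not exist" (old) or "delete ref" (new).
    return set(sha) == {"0"}


def git_is_ancestor(old: str, new: str) -> bool:
    # `git merge-base --is-ancestor` exits 0 when `old` is an ancestor of `new`,
    # i.e. the update is a fast-forward rather than a history rewrite.
    result = subprocess.run(["git", "merge-base", "--is-ancestor", old, new])
    return result.returncode == 0


def check_push(old: str, new: str, ref: str,
               is_ancestor: Callable[[str, str], bool]) -> Optional[str]:
    """Return a rejection reason, or None if an agent may make this update."""
    if ref in PROTECTED_REFS:
        return "direct pushes to main go through operator review"
    if _is_zero(new):
        return "deleting branches requires operator approval"
    if not _is_zero(old) and not is_ancestor(old, new):
        return "non-fast-forward (force) pushes are not allowed"
    return None  # a new branch, or a fast-forward on a non-protected branch


def run_hook(stdin: TextIO = sys.stdin,
             is_ancestor: Callable[[str, str], bool] = git_is_ancestor) -> int:
    """Check every ref update in the push; any rejection fails the whole push."""
    failures = []
    for line in stdin:
        if not line.strip():
            continue
        old, new, ref = line.split()
        reason = check_push(old, new, ref, is_ancestor)
        if reason:
            failures.append(f"rejected {ref}: {reason}")
    for message in failures:
        print(message, file=sys.stderr)
    return 1 if failures else 0
```

Installed as the hook, the script would end with `sys.exit(run_hook())`. Agents keep full freedom on their own branches; the hook only draws the line at `main`, deletions, and rewritten history.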

This is another recurring pattern: agents can propose, draft, branch, analyze, and prepare. Risky actions go through the operator.
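
That pattern generalizes into a small default-deny gate in front of every tool. A hedged sketch: the action names, the `POLICY` table, and the `Gate` class are hypothetical, and a real version would persist pending decisions rather than keep them in a list.

```python
from dataclasses import dataclass, field
from enum import Enum


class Mode(Enum):
    ALLOWED = "allowed"                # agent may just do it
    NEEDS_APPROVAL = "needs_approval"  # becomes an operator decision
    DENIED = "denied"


# Hypothetical policy table; anything not listed falls through to DENIED.
POLICY = {
    "search": Mode.ALLOWED,
    "fetch": Mode.ALLOWED,
    "draft_artifact": Mode.ALLOWED,
    "push_branch": Mode.ALLOWED,
    "open_review": Mode.ALLOWED,
    "publish_post": Mode.NEEDS_APPROVAL,
    "send_email": Mode.NEEDS_APPROVAL,
    "merge_to_main": Mode.NEEDS_APPROVAL,
    "deploy_production": Mode.NEEDS_APPROVAL,
}


@dataclass
class Gate:
    # (agent, action, detail) tuples waiting for the operator
    pending: list[tuple[str, str, str]] = field(default_factory=list)

    def request(self, agent: str, action: str, detail: str) -> Mode:
        mode = POLICY.get(action, Mode.DENIED)
        if mode is Mode.NEEDS_APPROVAL:
            self.pending.append((agent, action, detail))
        return mode
```

The default-deny fallback is the important design choice: a new capability has to be added to the table on purpose before any agent can use it.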

The fourth problem is visual representation.

I did not expect the office view to matter as much as it does.

A task board is useful. A log is useful. A mission summary is useful. But seeing the agents in a little office, with desks and names and activity bubbles, changes the feel of the system. It makes the team legible. It gives the operator a spatial memory of who is doing what.

This is not just decoration. It is interface compression.

A desk with an alert marker tells you something. A speech bubble tells you something. An empty desk tells you something. A cluster of sticky notes tells you something. The visual layer gives the operator peripheral awareness, which is exactly what you need when the system is doing work while you are not staring directly at a transcript.
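
The office itself is drawn with Pixi, but the logic behind those cues is small enough to sketch on its own, here in Python for consistency with the other examples. `AgentState`, `desk_indicators`, the cue names, and the threshold of three open tasks for sticky notes are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AgentState:
    """Hypothetical snapshot the office view polls for each desk."""
    running: bool            # is the agent's container up?
    blocked: bool
    streaming_output: bool   # currently producing something
    open_tasks: int


def desk_indicators(s: AgentState, sticky_threshold: int = 3) -> list[str]:
    """Translate agent state into the cues drawn at its desk, most urgent first."""
    if not s.running:
        return ["empty_desk"]            # nothing else is meaningful without a worker
    cues = []
    if s.blocked:
        cues.append("alert_marker")
    if s.streaming_output:
        cues.append("speech_bubble")
    if s.open_tasks >= sticky_threshold:
        cues.append("sticky_notes")
    return cues
```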

That said, the office currently looks like a very determined prototype.

We started with a Pixi canvas. Then desks were too close together. Then the office looked like a Q*bert level. Then it became more isometric. Then we started replacing drawn shapes with actual pixel-art sprites. Floors, walls, desks, people, bookshelves, plants. It is slowly becoming an office.
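
The step from flat shapes to “more isometric” usually comes down to one small transform: project grid cells to screen pixels with a 2:1 isometric mapping, then draw back to front so nearer desks overlap farther ones. A sketch with hypothetical 64×32 pixel tiles; the formula is the standard one for 2:1 pixel-art grids, not necessarily the exact one this office uses.

```python
def grid_to_screen(col: int, row: int,
                   tile_w: int = 64, tile_h: int = 32) -> tuple[float, float]:
    """Project a grid cell to screen pixels using a 2:1 isometric transform."""
    x = (col - row) * tile_w / 2   # moving along col goes right, along row goes left
    y = (col + row) * tile_h / 2   # both axes move down the screen
    return x, y


def draw_order(cells: list[tuple[int, int]]) -> list[tuple[int, int]]:
    """Painter's algorithm: cells further back (smaller col + row) draw first."""
    return sorted(cells, key=lambda c: (c[0] + c[1], c[0]))
```

With desks placed on grid cells rather than pixel coordinates, “too close together” becomes a matter of leaving empty cells between them.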

A tiny, slightly uncanny office full of containerized language models trying to grow a short story website.

Which is, I admit, a specific genre.

The fifth problem is momentum.

A human team does not complete one task and then wait forever because nobody clicked the right button. Or at least, ideally it does not. Agents, however, are very good at stopping after producing a status update. They will say “standing by” with great confidence, as though standing by is the work.

So the mission loop needs to become more opinionated.

When tasks are ready, start them. When tasks are in review, surface the artifacts. When the operator resumes the mission, accept reviewed outputs as context and create the next phase of work. When decisions are pending, stop and ask. When no decisions are pending and no work is happening, that is not a success state. That is a coordination failure.
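
Those rules are opinionated enough to write down as one decision function the loop calls on every tick. A minimal sketch: `MissionSnapshot`, `Next`, and `next_actions` are hypothetical names, and treating pending decisions as a hard stop for new work is one reading of “stop and ask.”

```python
from dataclasses import dataclass
from enum import Enum


class Next(Enum):
    START_TASKS = "start_tasks"
    SURFACE_REVIEW = "surface_review"
    ASK_OPERATOR = "ask_operator"
    PLAN_NEXT_PHASE = "plan_next_phase"
    WAIT = "wait"
    COORDINATION_FAILURE = "coordination_failure"


@dataclass
class MissionSnapshot:
    ready: int               # assigned but not yet started
    running: int
    in_review: int           # outputs waiting for the operator
    pending_decisions: int
    operator_resumed: bool   # operator reviewed outputs and said "continue"


def next_actions(m: MissionSnapshot) -> list[Next]:
    """Decide what the mission loop does next instead of 'standing by'."""
    if m.pending_decisions:
        return [Next.ASK_OPERATOR]        # nothing new starts until answered
    actions = []
    if m.ready:
        actions.append(Next.START_TASKS)
    if m.in_review:
        actions.append(Next.SURFACE_REVIEW)
    if m.operator_resumed:
        actions.append(Next.PLAN_NEXT_PHASE)
    if actions:
        return actions
    if m.running:
        return [Next.WAIT]                # work is genuinely in progress
    return [Next.COORDINATION_FAILURE]    # idle with nothing pending is a bug
```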

This is where the system is starting to become interesting.

It is not just a prettier chat UI.

It is a control plane for agent work.

The components are becoming clear:

- Agents as named, running workers

- Missions as durable goals

- Tasks as units of ownership

- Artifacts as reviewable outputs

- Decisions as explicit human interventions

- Tools as permissioned capabilities

- A mission manager as the coordination layer

- An office as the operator interface

None of these pieces are exotic on their own. That may actually be the point. The interesting part is not that an agent can write a marketing checklist. We already know models can produce checklists. The interesting part is whether a team of agents can be given a goal, divide work, use tools, produce reviewable artifacts, notice blockers, ask for decisions, continue after decisions, and leave behind enough state that the operator can understand what happened.

That is the system I want.

Not an agent that pretends to be a company.

A system where agents can participate in company work without everything becoming a fog of markdown and confidence.

The first real test mission is modest: help ScribeLoft.app get more traffic and attract more writers, with basically no budget and no external actions without approval.

This is deliberately not “make my company profitable,” because that turned out to be too vague even for humans, never mind a group of local models in an isometric office. The tighter mission is better. More concrete. More reviewable. More likely to reveal whether the orchestration works.

And it is revealing things.

Artifacts need to be real. Search needs to be brokered. Files need to be discoverable. Agents need to know when they are allowed to act and when they are only allowed to propose. The mission manager needs to summarize, not merely narrate. The operator needs visibility without being dragged into every intermediate thought.

That last part may be the whole product.

Autonomy is not the absence of a human.

It is the reduction of unnecessary human involvement while preserving the moments where human judgment actually matters.

That is a much less flashy framing than “AI employees,” but I think it is closer to the truth.

I do not want a fake company full of fake workers pretending to do fake work.

I want a small, inspectable, permissioned agent team that can take a real goal, do bounded useful work, and hand back artifacts I can review.

If it works for ScribeLoft, maybe it works for product planning.

If it works for product planning, maybe it works for code.

If it works for code, maybe it works for operations.

And if it works for operations, then the office starts to look less like a toy and more like a control surface for a different kind of software organization.

That is probably overstating it.

But only probably.

For now, it is a little office with agents at desks, a mission control panel, an artifact viewer, a growing list of weird edge cases, and a very patient operator watching the machines learn how to be a team.

Which feels like enough to keep building.