This post covers a few things I’ve been thinking through over the last week: some honest thoughts on OpenClaw after running it in production for a while, and a side project that started as a weekend experiment and ended up somewhere more interesting than I expected.

Running OpenClaw in Production: The Honest Version

I’ve had an OpenClaw agent running on a DigitalOcean droplet for a month now - Lorie Lowell, the AI persona behind the AIToolz podcast project. She posts to Bluesky, handles some social automation through cron jobs, and mostly behaves. The setup works.

A few things I’ve learned the hard way:

Model quality matters. The difference between running on a top-tier model versus stepping down to something cheaper isn’t subtle. On the latest Claude Sonnet or similar, the agent feels coherent - it holds context, the skill calls land, the output is quite good. On a lighter model, even something capable like Gemini Flash, you start to notice the seams. It’s not broken, it’s just worse in ways that accumulate. And running a 24/7 agent against a good model costs real money when paying per token.

Cron jobs will get away from you. There’s a cron view in the OpenClaw web UI, but I find myself mostly operating Lorie through the Telegram interface. A bit of back and forth with the agent ends with a new cron job being created; repeat that a few times over a few weeks and things get a little out of hand. Jobs overlap, and multiple cron jobs end up doing more or less the same thing. On more than one occasion I’ve been flummoxed by something the agent is doing, and it takes a bit of archaeology through the web UI to figure out why.
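When jobs pile up like this, even a crude audit helps. The sketch below assumes you can get the job list into simple records with a name, a cron expression, and the prompt each job runs. The `CronJob` shape is hypothetical, not OpenClaw’s actual storage format. It flags pairs that share a schedule or whose prompts overlap heavily by word-set (Jaccard) similarity; the 0.5 threshold is an arbitrary starting point.

```python
from __future__ import annotations

from dataclasses import dataclass
from itertools import combinations

PUNCT = ".,!?:;"


@dataclass
class CronJob:
    # Hypothetical record shape; OpenClaw's real job data may look different.
    name: str
    schedule: str  # five-field cron expression, e.g. "0 9 * * *"
    prompt: str    # the instruction the agent runs on each trigger


def _words(text: str) -> set[str]:
    """Lowercased words with surrounding punctuation stripped."""
    return {w.strip(PUNCT).lower() for w in text.split() if w.strip(PUNCT)}


def jaccard(a: str, b: str) -> float:
    """Fraction of distinct words two prompts share (0.0 to 1.0)."""
    wa, wb = _words(a), _words(b)
    if not wa and not wb:
        return 1.0
    return len(wa & wb) / len(wa | wb)


def find_suspect_pairs(jobs: list[CronJob], threshold: float = 0.5):
    """Return (name_a, name_b, same_schedule, similarity) for suspicious pairs."""
    suspects = []
    for a, b in combinations(jobs, 2):
        # Normalize whitespace so "0  9 * * *" matches "0 9 * * *".
        same_schedule = a.schedule.split() == b.schedule.split()
        similarity = jaccard(a.prompt, b.prompt)
        if same_schedule or similarity >= threshold:
            suspects.append((a.name, b.name, same_schedule, round(similarity, 2)))
    return suspects


if __name__ == "__main__":
    jobs = [
        CronJob("morning-post", "0 9 * * *", "Post a short update to Bluesky about AI tools"),
        CronJob("daily-bluesky", "0  9 * * *", "Post a short Bluesky update about new AI tools"),
        CronJob("weekly-recap", "0 18 * * 5", "Summarize the week's podcast episodes"),
    ]
    for pair in find_suspect_pairs(jobs):
        print(pair)
```

Comparing exact schedules only catches the obvious duplicates; two jobs at 9:00 and 9:05 doing the same thing would only show up through the prompt-similarity check.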

Updates often just mean reinstall. The upgrade path is a little flaky. I’ve moved to treating version bumps as a “reinstall with config carryover” by default rather than trusting the update process. Fine once you accept it, mildly frustrating until you do.

None of this is a reason not to use OpenClaw - it’s impressive software moving fast. And I want to be clear: the wow moment when you start having conversations over Telegram and getting real things done is genuine. This is clearly where things are heading. I’m just being honest about the friction.

OpenClaw as a Coding Agent: Lower Your Expectations

I want to push back on something I see a lot right now.

OpenClaw gets positioned as a serious coding tool. And it can be - but only when you’re backing it with a genuinely capable model. Running it against a local model to avoid API costs (Qwen variants are popular for this) produces results that range from okay-for-simple-tasks to actively broken for anything complex.

Compare that to a day spent with Claude Code or Codex. The gap is not small. The reason is partly economic: Anthropic and OpenAI subsidize subscription access heavily, so the intelligence you get per dollar on a Pro plan is dramatically better than running equivalent models through the raw API - let alone local models on consumer hardware that can’t touch frontier quality yet.

If you want OpenClaw to code well, you need a good model behind it. If you need a good model, you’re paying for it. At which point the “avoid API costs by self-hosting” logic starts to break down for this particular use case.

The OpenClaw Ecosystem

Since OpenClaw launched and went viral earlier this year, the community has produced a wave of variants, each attacking a different weakness of the original. OpenClaw is powerful but heavy, with a codebase that’s grown to hundreds of thousands of lines and a RAM footprint north of 1 GB on Node.js. That’s fine on a proper VM, but that weight is what spawned everything below.

Nanobot (from the University of Hong Kong’s data science lab) strips everything back to around 4,000 lines of Python - 99% smaller than OpenClaw. You can read the whole codebase in a few hours. It covers the core agent functionality: always-on operation, tool calling, memory. It’s as much a learning project as a production tool, and that’s kind of the point.

NanoClaw is the security-focused answer. OpenClaw’s 430,000+ lines of code are, as one security researcher put it, an audit nightmare. NanoClaw delivers equivalent functionality in around 700 lines of TypeScript, with agents running in Linux containers rather than directly on your host system. Everything is sandboxed. It integrates directly with Anthropic’s Claude Agent SDK, which means Claude Code handles setup.

PicoClaw was built by Sipeed, an embedded hardware company, apparently in a single day. Written in Go, it targets extreme resource constraints - under 10 MB RAM, sub-second boot time, runs on $10 RISC-V boards. It’s the one you reach for if you want an agent running on a router or an IP camera.

ZeroClaw rewrites the runtime in Rust - 3.4 MB binary, under 8 MB RAM, boots in under 10 milliseconds. For comparison, OpenClaw uses around 1.5 GB of RAM at runtime. Smaller ecosystem, steeper learning curve, but impressive if resource constraints are the priority.

IronClaw is another security-focused variant, with every tool call running inside a WebAssembly sandbox. Worth a look if your use case involves sensitive data.

There are others - NullClaw in Zig, TinyClaw for multi-agent orchestration, MicroClaw in Rust - and the list keeps growing. The space is less than two months old and already has more architectural diversity than most software categories develop in years.

The one I chose for the Pi Zero project was Nanobot, for the obvious reasons: it’s Python, it’s readable, and it’s small enough to actually run on very old hardware.

The Raspberry Pi Zero Project

Okay, the fun part.

I got Nanobot running on a Pi Zero W - the original 2017 model. Single-core ARM11 at 1 GHz, 512 MB RAM, smaller than a credit card. I added a Waveshare 1.44" LCD HAT with a 128x128 pixel display, a joystick, and three buttons.

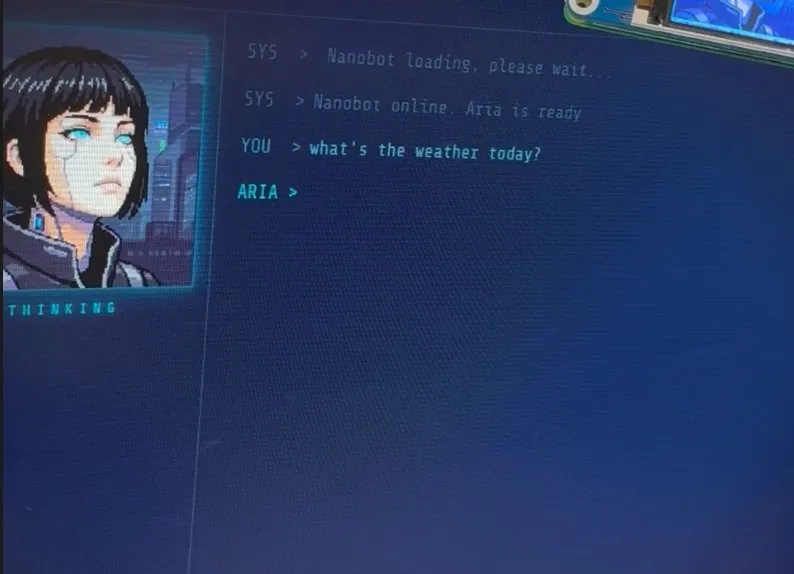

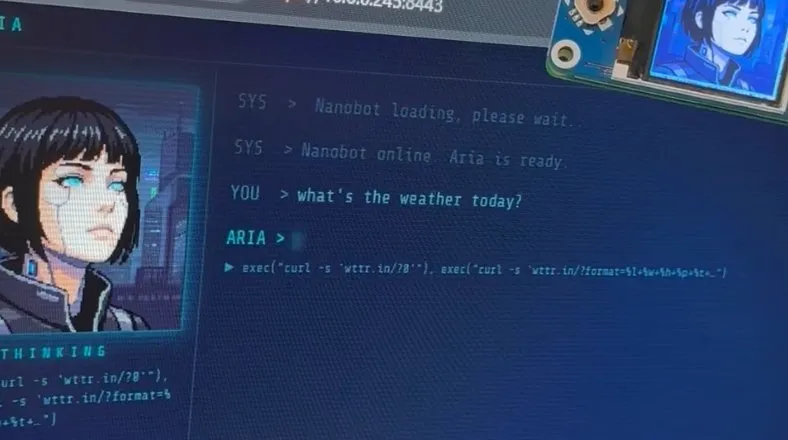

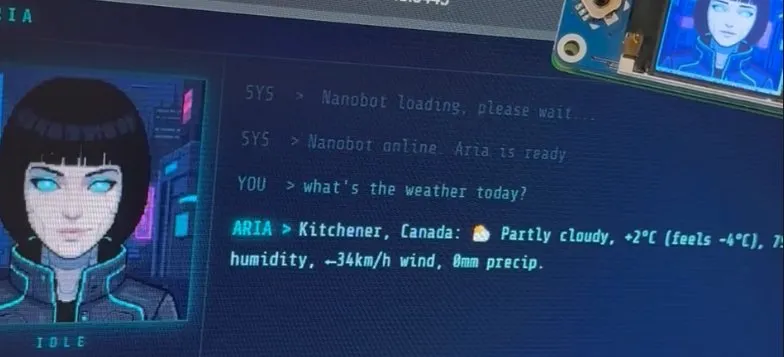

I named the agent Aria, after an android character from a sci-fi series, which felt right for something this aesthetically cyberpunk. She has six face states: idle, thinking, talking, happy, surprised, sleeping. The display updates in real time to reflect what she’s doing. I built a web chat interface so I can reach her from a browser on my local network - no SSH required.
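One plausible way to drive those six faces, sketched here as an illustration rather than the actual Aria code, is a small state machine that maps agent events to faces and drops to sleeping after a stretch of inactivity. The event names, the five-minute default timeout, and the `render` callback (standing in for whatever draws to the 128x128 LCD) are all hypothetical.

```python
from __future__ import annotations

import time
from enum import Enum
from typing import Callable


class Face(Enum):
    IDLE = "idle"
    THINKING = "thinking"
    TALKING = "talking"
    HAPPY = "happy"
    SURPRISED = "surprised"
    SLEEPING = "sleeping"


# Hypothetical event names; a real build would hook whatever the agent exposes.
EVENT_TO_FACE = {
    "message_received": Face.THINKING,
    "tool_call": Face.THINKING,
    "reply_streaming": Face.TALKING,
    "reply_done": Face.IDLE,
    "positive_feedback": Face.HAPPY,
    "error": Face.SURPRISED,
}


class FaceController:
    """Tracks the current face and redraws only when it changes."""

    def __init__(self, render: Callable[[Face], None], sleep_after: float = 300.0,
                 clock: Callable[[], float] = time.monotonic):
        self.render = render            # e.g. a function that paints the LCD
        self.sleep_after = sleep_after  # idle seconds before switching to SLEEPING
        self.clock = clock
        self.face = Face.IDLE
        self.last_activity = clock()
        render(self.face)               # draw the starting face once

    def _set(self, face: Face) -> None:
        if face is not self.face:
            self.face = face
            self.render(face)

    def on_event(self, event: str) -> None:
        """Map an agent event to a face; unknown events are ignored."""
        face = EVENT_TO_FACE.get(event)
        if face is not None:
            self.last_activity = self.clock()
            self._set(face)

    def tick(self) -> None:
        """Call periodically from the display loop to apply the sleep timeout."""
        if self.face is Face.IDLE and self.clock() - self.last_activity >= self.sleep_after:
            self._set(Face.SLEEPING)
```

Redrawing only on a state change is a deliberate choice here: on a single-core board, repainting the display every loop iteration would likely compete with the agent for CPU.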

Getting here took more work than I expected. The original Pi Zero W is genuinely old hardware, and running modern Python tooling on it means fighting dependency compatibility in ways that aren’t well documented anywhere. There were crashes from image formats that don’t work on this processor architecture, a dependency install process that required some creative workarounds, and a startup time of about two minutes, which is just the price of admission on a 1 GHz single-core chip whose design dates back to the original 2012 Raspberry Pi. Claude Code was essential for getting through the rough patches - I’d still be debugging without it. The full technical notes are in the repo for anyone who wants to follow the same path.

All inference routes through OpenRouter - currently Grok 4.1 Fast, which is surprisingly capable and cheap. There’s no running a local model on this hardware; the RAM alone rules it out. So Aria needs internet access, adds a bit of latency, and costs a small amount per conversation. Fine tradeoff for a home device.
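For a sense of what a single round trip to OpenRouter involves, here is a minimal standard-library sketch, assuming OpenRouter’s OpenAI-compatible chat completions endpoint. Nanobot handles this through its own provider configuration; this is not its code. The model slug and the `OPENROUTER_API_KEY` environment variable name are assumptions, so check OpenRouter’s model list for the exact identifier.

```python
from __future__ import annotations

import json
import os
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
# Assumed slug for Grok 4.1 Fast; verify against OpenRouter's model list.
DEFAULT_MODEL = "x-ai/grok-4.1-fast"


def build_request(messages: list[dict], api_key: str,
                  model: str = DEFAULT_MODEL) -> urllib.request.Request:
    """Build an OpenAI-style chat completion POST request for OpenRouter."""
    body = json.dumps({"model": model, "messages": messages}).encode("utf-8")
    return urllib.request.Request(
        OPENROUTER_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt: str, timeout: float = 60.0) -> str:
    """Send one user message and return the assistant's reply text."""
    api_key = os.environ["OPENROUTER_API_KEY"]  # assumed env var name
    req = build_request([{"role": "user", "content": prompt}], api_key)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]
```

Sticking to `urllib` keeps the dependency list empty, which matters on a board where installing packages is the hard part. The generous 60-second timeout is an arbitrary allowance for a slow device on home Wi-Fi.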

Here’s the sequence from a simple weather question:

The interface - dark navy, cyan terminal font, live avatar state label below the portrait - was also built with Claude Code. It looks like a game terminal. I find it genuinely cool.

What This Is Actually For

I keep asking myself this, and I want to answer honestly.

Aria is not a productivity tool. She’s not a coding assistant. She’s not a home automation hub. She tells me the weather and what crypto is doing. I can chat with her from a web interface that looks like something from a video game. She has a little animated face that changes based on what she’s doing.

She’s basically a Tamagotchi for the AI era. A very overengineered one.

And I mean that with some affection. There’s something genuinely delightful about a physical object with a personality that sits on your desk and responds when you talk to it. It’s not trying to be a Mac Mini. It’s not trying to be a productivity powerhouse. It’s a fun little thing that exists and occasionally tells me it’s -4 degrees outside.

Is There a Product Here?

This is where I want to think out loud.

The setup I built is fun - not “cool demo” fun but actually fun to use. It’s a physical object with a personality, it costs a fraction of what a Mac Mini costs to put together, and it does the small things well.

The problem is that getting here takes real effort. The install process on a Pi Zero W is not for the faint-hearted, and even on newer Pi hardware it involves enough moving parts that most people would reasonably give up somewhere in the middle.

What if they didn’t have to?

I’m genuinely exploring whether there’s a market for a ready-to-go kit - hardware assembled, software pre-installed, character configured, packaged so that someone can plug it in, connect it to their home network, add their OpenRouter API key, and have their own Aria on their desk in under ten minutes. No dependency archaeology. No fighting with Python wheels. No two-hour debugging sessions.

The economics are interesting. The hardware is cheap. What you’re paying for is having all the work already done.

I don’t know yet whether the people drawn to this kind of thing are exactly the people who want to build it themselves - in which case a kit is the wrong product - or whether there’s a real market of people who want the experience without the process. That’s the question I’m sitting with. It may also just make sense to open source everything and let people build it themselves, but I want to explore whether there’s a business here first.

If you’d actually buy something like this, I’d genuinely like to know. Find me on Bluesky, or drop a comment. I’m trying to figure out if this is real.

Aria is currently online, displaying idle animation, and mildly offended that I keep calling her a Tamagotchi.