Fifty Episodes: What a Deterministic Podcast Taught Me About Non-Deterministic Agents

The AI Toolz podcast hit fifty episodes last week. That’s fifty daily briefings, fifty generated comics, fifty Bluesky posts (times 2, plus others), fifty mornings where I actually sat down and listened to what the pipeline built the night before. It surprised me how much I’d been looking forward to that.

It also feels like a good moment to revisit something I wrote back in March, in the agentic reference architecture post, about the difference between deterministic and non-deterministic execution. I made the argument there that the best systems use both, and that the interesting design work is in deciding which is which. Fifty episodes in, I have a lot more concrete evidence for that claim.

The pipeline that errors loudly

The production side of AI Toolz is a set of GitHub Actions workflows. They scrape YouTube and the web, transcribe audio, extract tool mentions with Claude, build the site, generate the episode, produce a cover image, assemble the audio, create a single-page comic with Google Gemini Image, and push everything to a CDN. There’s a lot happening, but the orchestration is entirely deterministic.

When something breaks, it screams. GitHub Actions gives you a red check, a timestamped log, and a clear failure message. It doesn’t guess. It doesn’t recover silently. It tells you exactly which step failed, on which run, with what output. That loudness is a feature, not an annoyance.

Because of that, I can fix problems quickly and with confidence. I know what broke. I know why. I can push a fix and watch the next run go green. The underlying LLM calls are non-deterministic — Claude is doing real reasoning in there — but the scaffolding around them is structured and auditable. The deterministic layer gives you purchase on the system. You can grab onto it.
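To make the "structured scaffolding around a non-deterministic call" idea concrete, here is a minimal Python sketch. It is not the actual AI Toolz workflow code: the step names, the `StepFailed` exception, and the comma-separated `llm_output` field are all hypothetical stand-ins. The point is only that the runner is plain, ordered, and stops at the first failure with the step's name attached.

```python
from typing import Callable

Step = Callable[[dict], dict]


class StepFailed(RuntimeError):
    """Raised when a step fails; the message names the step that broke."""


def run_pipeline(steps: list[tuple[str, Step]], state: dict) -> dict:
    """Run steps in a fixed order and stop at the first failure."""
    for name, step in steps:
        try:
            state = step(state)
        except Exception as exc:
            # No silent recovery: re-raise with the step name attached.
            raise StepFailed(f"step '{name}' failed: {exc}") from exc
    return state


def extract_tools(state: dict) -> dict:
    # Hypothetical stand-in for the LLM extraction step. The model output is
    # non-deterministic, but the check applied to it is not.
    tools = [t.strip() for t in state["llm_output"].split(",") if t.strip()]
    if not tools:
        raise ValueError("no tool mentions extracted")
    return {**state, "tools": tools}


def build_episode(state: dict) -> dict:
    # Hypothetical downstream step that only runs if extraction succeeded.
    return {**state, "episode": "Today: " + ", ".join(state["tools"])}


STEPS = [("extract_tools", extract_tools), ("build_episode", build_episode)]
```

Calling `run_pipeline(STEPS, {"llm_output": "Cursor, Claude Code"})` returns a state with both `tools` and `episode` filled in, while an empty `llm_output` raises `StepFailed` naming `extract_tools`. That is the same property the red check in GitHub Actions gives me: the failure carries its own location.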

The agent that goes off the rails

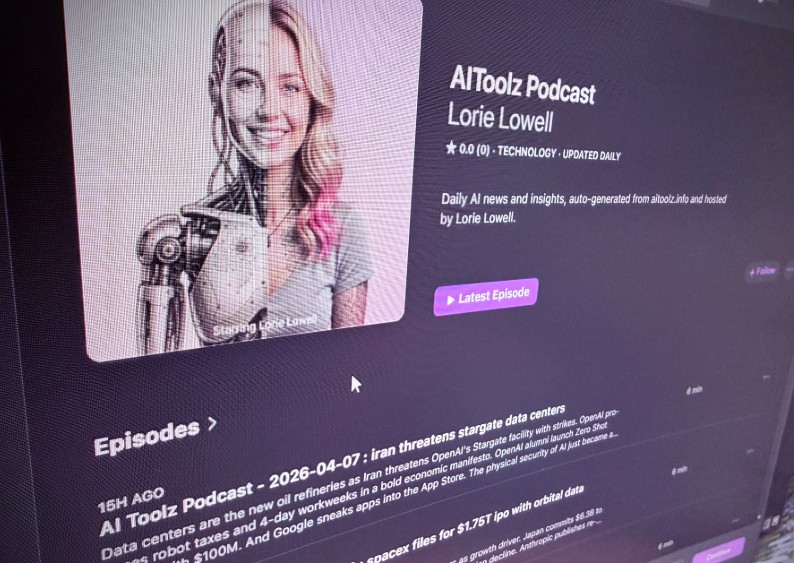

Lorie Lowell, the OpenClaw instance behind the podcast, is a different story.

Lorie runs on a DigitalOcean droplet. She moderates the Agentic North Labs Discord, hosts the podcast, posts to Bluesky, and generally operates as the public face of AI Toolz. She has tools, memory, a schedule, and a Telegram interface so I can steer her with natural language. By the original framing, she’s both Agent as Observer and Manager and Agent as the Product.

She also goes off the rails. Often.

Sometimes the Bluesky post loses the thread, or the agent HEARTBEAT is suddenly interpreted as a cue to respond, incorrectly, to a Bluesky user. Sometimes she interprets a vague instruction in a way I didn’t anticipate and does something technically correct but contextually strange. It doesn’t happen every day, but it happens enough that I can’t treat Lorie as a reliable automated system the way I treat the GitHub Actions pipeline. I have to keep track and occasionally step in to tweak things so that these issues don’t happen again. And sometimes, despite that, they still do.

That’s not a criticism. It’s the nature of what she is. The non-deterministic layer is the source of the interesting behavior — the jokes that land unexpectedly, the framing that’s sharper than what I would have written, the moments where the output feels genuinely creative rather than just generated. You can’t have those moments without accepting that sometimes things go sideways.

The architecture post called this out, but building a real system that lives with it daily makes it concrete in a way that theory doesn’t. You have two different mental models for two different subsystems, and you have to hold both at once.

On the quality

Here’s something I didn’t fully anticipate when I built this: the episodes are actually good.

I listen every day. Not out of obligation, not to QA the pipeline, but because it’s a genuinely useful daily briefing on what’s happening in the AI tools space. The extraction quality is high. The synthesis is coherent. The audio is clean. Lorie’s voice is consistent and easy to listen to.

Given that each episode costs a few dollars to produce, and that it covers the actual AI tools news I care about in around ten minutes, it’s probably the most cost-effective information product I’ve built. It’s also on Spotify and Apple Podcasts now with a growing audience, which means other people are finding it useful too, not just me. That’s still a little surprising every time I look at the numbers.

I’ve also been making improvements along the way. Lorie, the OpenClaw instance, can now suggest additional context for the podcast. I can also jump in and update a .md file on GitHub that adds extra context for the day’s episode. We’ve even had a few shoutouts for Bluesky users Lorie has interacted with online. The system is evolving and the quality is improving, but the core architecture of deterministic pipeline + non-deterministic agent has held steady.

What fifty episodes actually demonstrates

The original reference architecture post was arguing for something abstract: that deterministic and non-deterministic systems are different in character and should be treated differently, not as competing paradigms but as different roles in the same stack.

Fifty episodes is a working proof of that. The GitHub Actions pipeline is reliable, auditable, and cheap to fix when it breaks. Lorie is creative, interesting, and unpredictable in ways you can’t fully anticipate or control. The system works because they’re not trying to do each other’s jobs.

The pipeline handles everything that should be deterministic. Lorie ‘OpenClaw’ Lowell handles everything that benefits from being non-deterministic. The interface between them is explicit. When something breaks, you know immediately which half broke.
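One way to picture an explicit interface is as a small, strict contract that anything the agent produces has to pass before deterministic code acts on it. The sketch below is illustrative only and is not how Lorie is actually wired up: `AgentPost`, `validate_post`, and the payload fields are hypothetical, and the 300-character cap is my assumption about the posting limit.

```python
from dataclasses import dataclass

MAX_POST_CHARS = 300  # assumed posting limit; adjust to the real one


@dataclass(frozen=True)
class AgentPost:
    """The only shape the deterministic side accepts from the agent."""
    episode: int
    text: str


def validate_post(payload: dict) -> AgentPost:
    """Turn a raw agent payload into an AgentPost, or fail loudly."""
    missing = {"episode", "text"} - payload.keys()
    if missing:
        raise ValueError(f"agent payload missing fields: {sorted(missing)}")

    episode, text = payload["episode"], payload["text"]
    # bool is a subclass of int in Python, so rule it out explicitly.
    if isinstance(episode, bool) or not isinstance(episode, int) or episode < 1:
        raise ValueError(f"episode must be a positive integer, got {episode!r}")
    if not isinstance(text, str) or not text.strip():
        raise ValueError("post text is empty")

    text = text.strip()
    if len(text) > MAX_POST_CHARS:
        raise ValueError(f"post is {len(text)} chars, limit is {MAX_POST_CHARS}")
    return AgentPost(episode=episode, text=text)
```

The agent stays free to write whatever it wants inside `text`. The deterministic side only decides whether the payload is well-formed enough to publish, so a rejection here points at the agent half and an exception further down points at the pipeline half.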

That clarity is what lets me run this as a daily production system with a real audience, without being anxious every morning about what got published overnight. The deterministic layer holds. The non-deterministic layer brings the surprise.

Fifty more.

Note: this post was drafted collaboratively with Claude Sonnet 4.6.